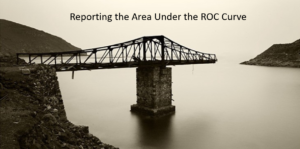

U of Michigan study: Epic’s sepsis predictive model has “poor performance” due to low AUC, but reporting AUC is like building a bridge halfway over a river. How to finish the job.

The Epic Sepsis Model (ESM) is a widely used proprietary predictive model. A validation paper by Wong et al. described it as having poor performance, citing a low area under the ROC curve (AUC). But the evaluation did not specify the protocol in which the ESM was intended to be used, and it did not estimate the outcomes of that protocol compared to usual care.
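To make the gap concrete, here is a minimal Python sketch of why an AUC on its own leaves the question open. Everything in it is an assumption made for illustration: the scores and labels are toy values (not ESM data), the protocol is a hypothetical "alert when the score is at or above a threshold" rule, usual care is taken to mean "no alerts", and the benefit and cost figures are in arbitrary, made-up units.

```python
def auc(scores, labels):
    """Area under the ROC curve via pairwise comparison (ties count half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def net_benefit_vs_usual_care(scores, labels, threshold,
                              benefit_per_true_alert, cost_per_alert):
    """Net benefit of a hypothetical alerting protocol relative to usual care.

    Assumption: usual care means no alerts, so its net benefit is 0.
    Units are arbitrary and purely illustrative.
    """
    alerted = [y for s, y in zip(scores, labels) if s >= threshold]
    true_alerts = sum(alerted)
    return benefit_per_true_alert * true_alerts - cost_per_alert * len(alerted)


# Toy data (hypothetical): model scores and whether sepsis occurred.
scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2]
labels = [1, 0, 1, 0, 0, 1, 0, 0]

auc_value = auc(scores, labels)

# Same model, same threshold, two different assumed protocols.
net_high = net_benefit_vs_usual_care(scores, labels, 0.65,
                                     benefit_per_true_alert=10, cost_per_alert=1)
net_low = net_benefit_vs_usual_care(scores, labels, 0.65,
                                    benefit_per_true_alert=1, cost_per_alert=1)

print(f"AUC: {auc_value:.3f}")
print(f"Net benefit, high-value intervention: {net_high}")
print(f"Net benefit, low-value intervention: {net_low}")
```

The AUC is identical in both cases, yet under these assumed numbers one protocol beats usual care and the other does worse than it. The model's discrimination is only one input; the protocol and its consequences determine the rest.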