In an excellent article in The New York Times Magazine, Jeneen Interlandi reported on her interviews with leaders of CDC’s new $200M Center for Forecasting and Outbreak Analytics (CFA) and provided context regarding the challenges facing infectious disease modeling in general. But Ms. Interlandi and the CFA leaders she interviewed missed a really important point. Infectious disease modeling should not be about forecasting. It should be about decision support.

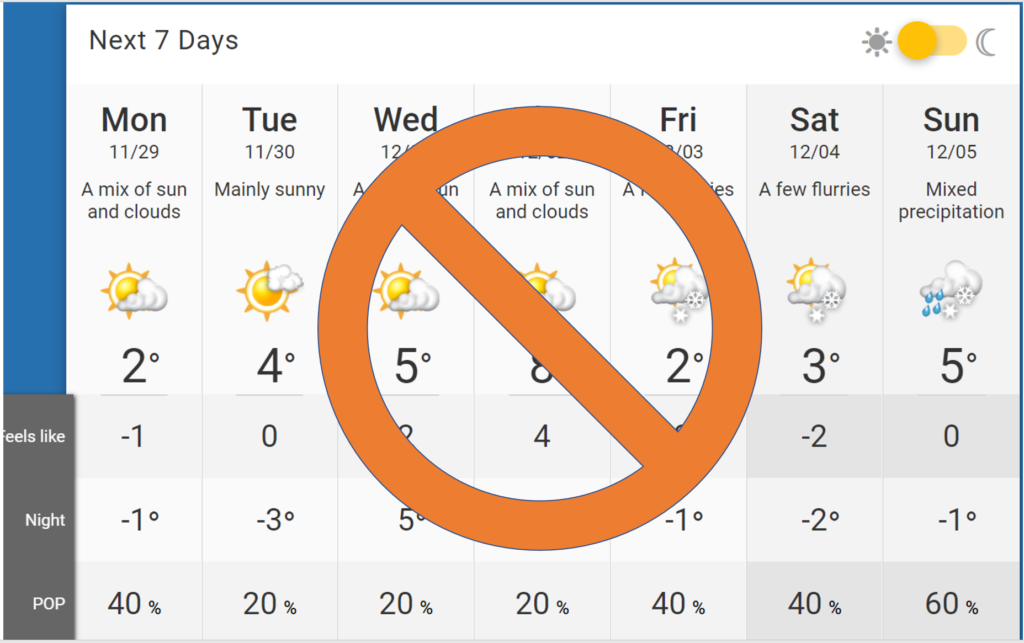

I agree with CDC’s aspirations to centralize, or at least proactively coordinate, infectious disease modeling efforts and to invest more resources in them. I understand CDC’s desire to legitimize that goal, and to persuade Congress to allocate the funding, by pointing to the precedent of the nationally funded and centralized weather forecast modeling done by the National Weather Service, part of the National Oceanic and Atmospheric Administration (NOAA). I have used the weather modeling metaphor myself many times. The Reward Health website home page includes an image showing the paths of hurricanes approaching the East Coast as predicted by an ensemble of weather forecasting models.

But the weather forecasting metaphor so often cited among pandemic modelers has had the unfortunate consequence of leading people to think that the purpose of infectious disease models should be to produce “forecasts.” Journalists and cable TV pundits describe pandemic models that way. Experienced academic modelers and local public health officials describe them that way. And now, the CDC has enshrined the “forecasting” conceptualization in the name of its new center.

But infectious disease modeling should not be about predicting when a wave will peak or when hospitals will run out of beds. That is a fool’s errand. The complexity and uncertainty of disease transmission dynamics and of human behavioral responses make it difficult to produce precise estimates of the timing and location of outbreak events. Furthermore, in a politically heated environment, time and location predictions that turn out to be inaccurate play into bad-faith efforts by political actors to discredit public health officials, clearing the way for their misinformation.

Even if investment in improved modeling could make such forecasts more accurate within a reasonable period of time, that investment would be a distraction from the real need: supporting better decision-making when selecting among alternative candidate interventions and policies for containment, mitigation, treatment and prevention. Decision-makers need to decide how to prioritize vaccinations, whether booster shots are worthwhile, what travel restrictions are necessary, what activities to restrict or shut down, when to require masking, what types of changes to ventilation systems are worthwhile, which treatments should be used, and so on.

When supporting decisions about which policy alternative to select, the important thing is to use the current best available information to provide explicit estimates of the differences in magnitude of the various health and economic outcomes that are thought to differ materially across the alternatives. That process is complex and challenging, as I’ve described before here and here. But the good news is that developing models intended to estimate outcome differences among policy alternatives is a far easier task than predicting the exact location and timing of pandemic-related events.

CDC Should Stay in Its Science Lane

Even with the support of useful models, policy decisions ultimately require trade-offs among various health and economic outcomes. Making such trade-offs requires judgment about values and priorities, which are inherently subjective and non-scientific. Those policy decisions are made by many different authorities, including city, county and state-level political leaders and public health officials, leaders of health care organizations, and leaders of private businesses. Therefore, the new CDC CFA should provide excellent models with transparent logic and assumptions and clear outputs, but the Center should stay in its science lane and serve up the information to support policy decision-making by those other authorities. Too often, the CDC, the NIH and numerous other federal and non-federal leaders and experts have made pronouncements presented as purely “science-driven” when the underlying decisions included non-scientific considerations that were not explicitly acknowledged. For example, early assertions that masks were not necessary were undoubtedly influenced by underacknowledged concerns about the inadequate supply of PPE for health care workers. Early dismissal of aerosol transmission of SARS-CoV-2 was probably influenced by underacknowledged concerns about the economic implications of modifying ventilation systems.

I’m not arguing that policy decisions should be based only on science and not on economic or political considerations. On the contrary, policy decision-making necessarily involves health and economic trade-offs, and such trade-offs carry inherent subjectivity, which political processes are intended to adjudicate. My point is that centralizing more of our modeling capabilities in a federal agency does not mean that policy decision-making authority should be centralized, any more than centralizing weather forecast modeling means that NOAA is responsible for deciding whether to cancel an outdoor wedding because of forecasted rain. The CDC CFA should focus on developing and serving up useful models and model outputs, thereby building trustworthiness and credibility over time.

2 thoughts on “CDC’s new $200M Center for Forecasting and Outbreak Analytics mistakenly frames modeling efforts as “forecasting.” It should be all about policy decision support.”

My comment in NY Times and associated replies:

https://www.nytimes.com/2021/11/22/magazine/cdc-pandemic-prediction.html#permid=115674471


Comments are closed.